Designing Hierarchical Memory Systems for Long-Term Autonomy in AI Agents

Context is the new moat!

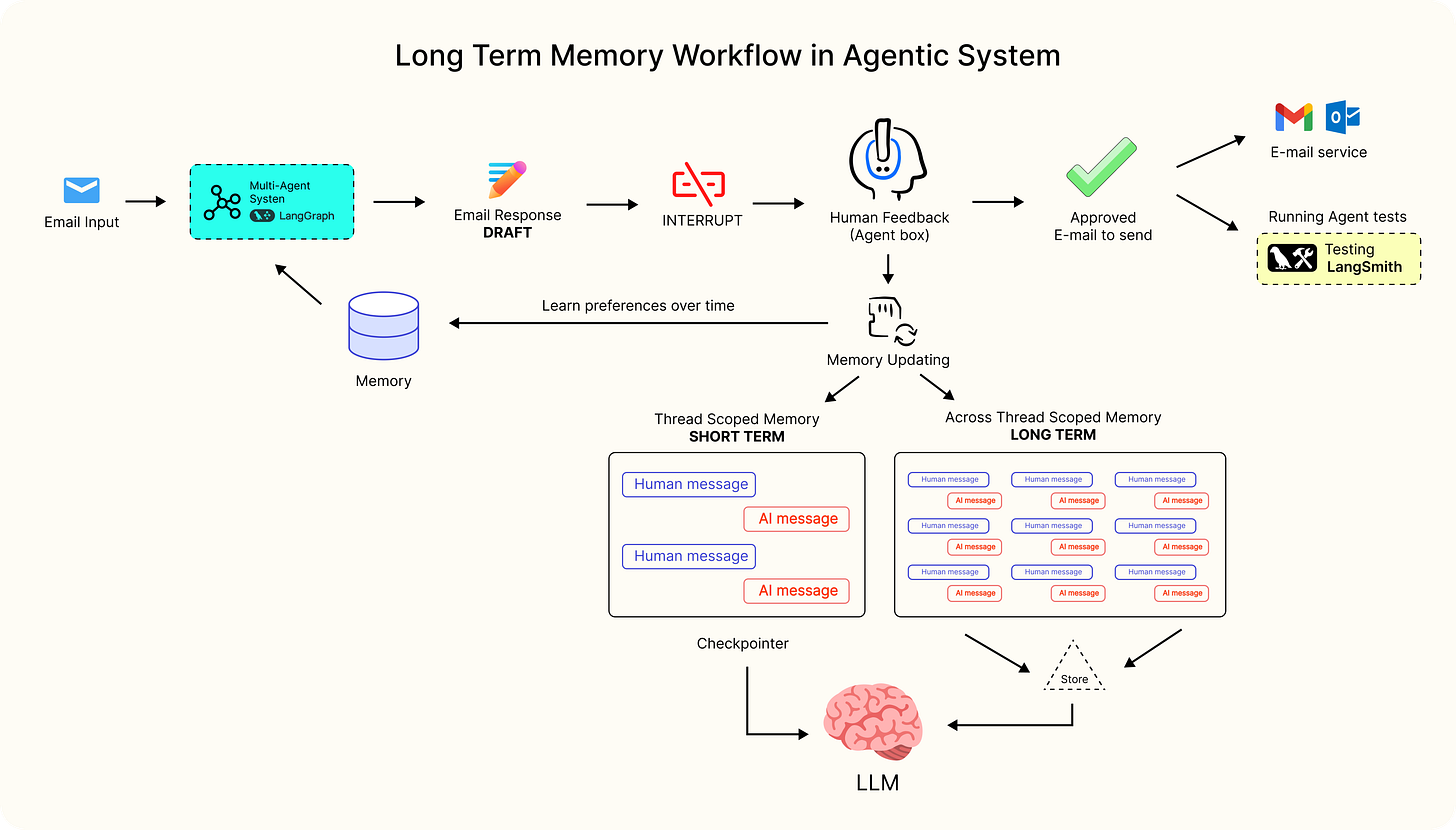

AI agents are advancing rapidly. Built on large language models (LLMs), today's AI agents are dynamic, goal-oriented systems capable of autonomous operation: they can plan, browse the web, write code, and more. To do this well, they must remember past interactions, so they rely heavily on internal memory to retain prior dialogue information.

However, internal memory is bounded by the LLM's finite context window, which makes agents ineffective over long-term interactions.

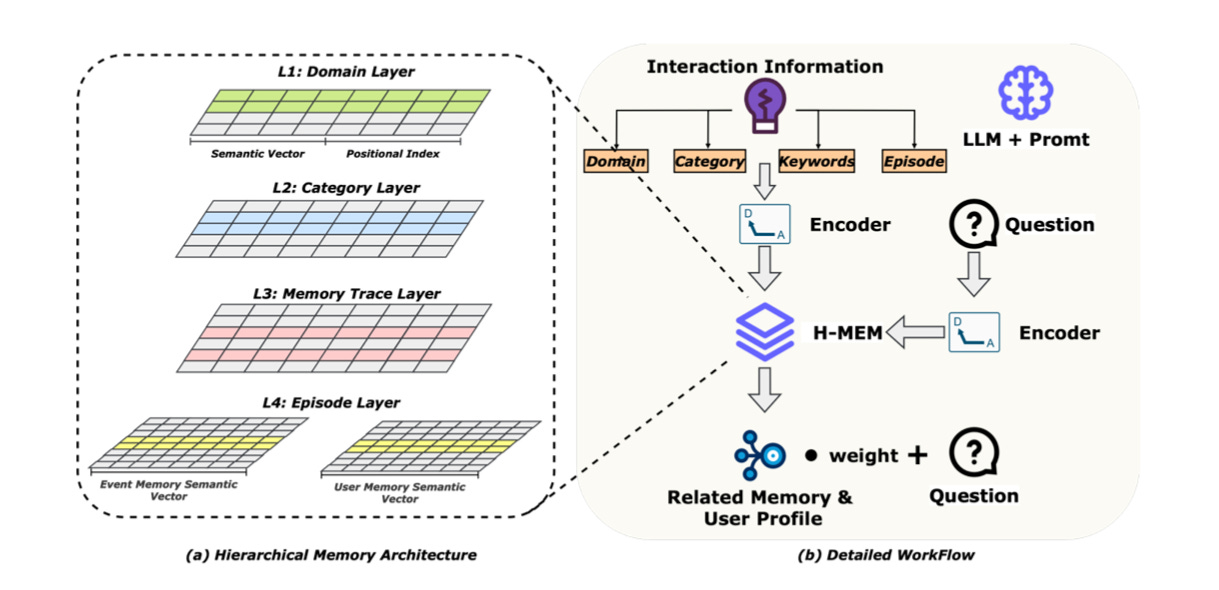

The Hierarchical Memory (H-MEM) architecture restructures an agent's memory for structured storage, systematic organization, and efficient retrieval. It organizes information across four levels of semantic abstraction and indexes each memory by its level, so the agent fetches only the entries relevant to each level instead of scanning everything. This cuts retrieval overhead and speeds up access.

In this article, you will learn how hierarchical memory systems address weaknesses in existing LLM-based agents and enable long-term autonomy.

Why Flat Retrieval-Augmented Memory Fails at Scale

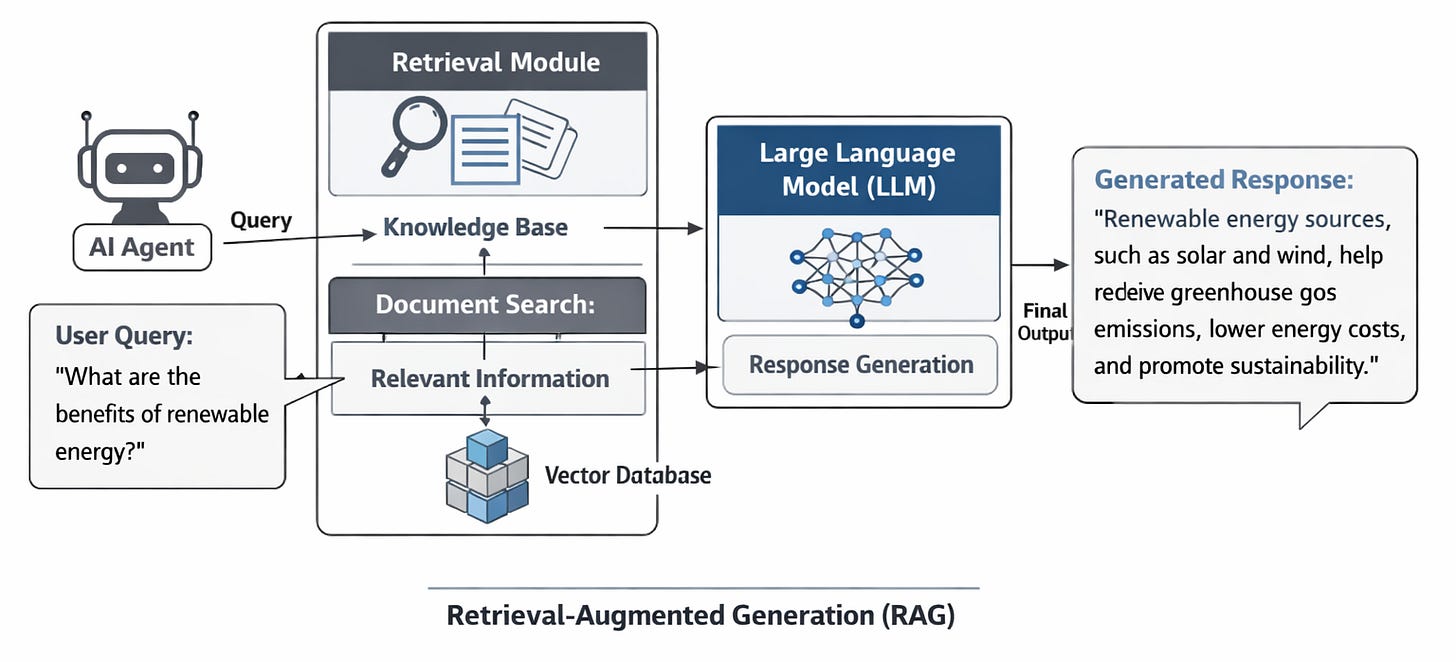

Retrieval-augmented generation (RAG) has become an industry standard for building AI agents. RAG connects a model to external, authoritative knowledge bases outside its training data, so AI tools can deliver more relevant, accurate, and up-to-date responses.

However, most RAG implementations treat memory as a flat embedding store: every stored interaction becomes a vector at the same level of granularity, and retrieval is a similarity search over all of them. This design has several limitations at scale:

No abstraction hierarchy: Raw interaction logs coexist with generalized knowledge.

No temporal modeling: Memories are not linked by temporal edges, so retrieval cannot propagate along them, and queries that require chaining context across interactions perform poorly.

No procedural encoding: Successful action sequences are not stored as reusable skills.

Unbounded growth: Memory accumulation increases retrieval noise and latency.
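To make these limitations concrete, here is a minimal Python sketch of a flat memory store. It assumes plain lists and cosine similarity rather than a real vector database (production stores usually use approximate nearest-neighbor indexes, which reduce but do not remove this cost), and `FlatMemoryStore` is a hypothetical name. Every memory sits at the same level, nothing records time or reusable skills, and each query is scored against every entry, so the `comparisons` counter grows with the store.

```python
import math


class FlatMemoryStore:
    """Hypothetical flat memory: every entry lives at the same level, with no hierarchy."""

    def __init__(self):
        self.entries = []     # (vector, text) pairs: raw logs and general knowledge mixed together
        self.comparisons = 0  # similarity computations performed across all searches

    def add(self, vector, text):
        self.entries.append((vector, text))

    @staticmethod
    def cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def search(self, query, k=3):
        # Exhaustive scan: every query is scored against every stored entry
        scored = []
        for vector, text in self.entries:
            self.comparisons += 1
            scored.append((self.cosine(query, vector), text))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]


store = FlatMemoryStore()
store.add([1.0, 0.0], "user asked about tax deadlines")
store.add([0.9, 0.1], "user prefers short answers")
store.add([0.0, 1.0], "user debugged a Python script")
print(store.search([1.0, 0.0], k=1))  # ['user asked about tax deadlines']
print(store.comparisons)              # 3
```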

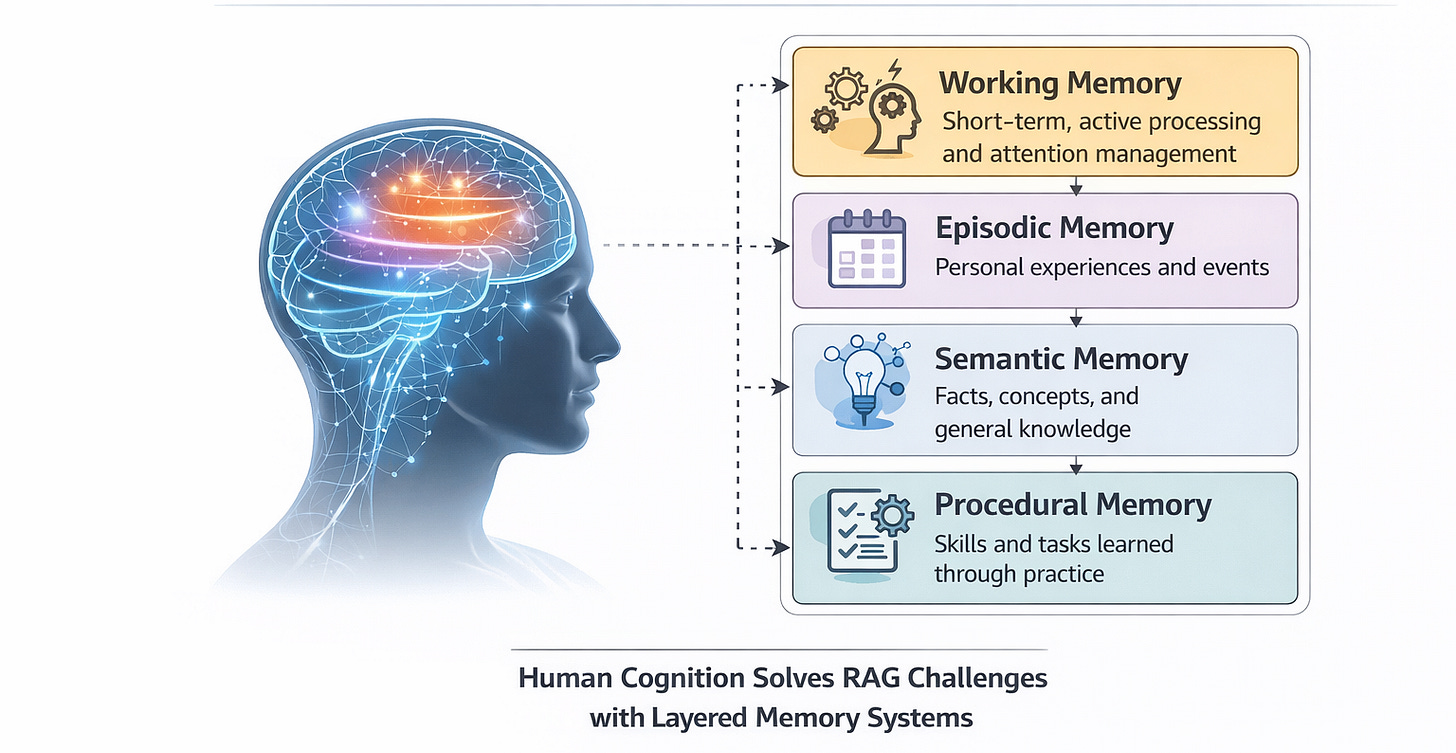

Human cognition avoids these problems with layered memory, typically divided into:

Working memory

Episodic memory

Semantic memory

Procedural memory

These subsystems interact dynamically through consolidation, in which short-lived experiences are gradually stabilized into durable knowledge and skills. AI agents are computational rather than biological, but they need a similar hierarchy for efficiency and stability.
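Consolidation between subsystems can be illustrated with a toy model. This Python sketch is an analogy, not a cognitive model and not part of H-MEM: it assumes a fixed-size working memory, treats every item pushed out of working memory as an episode, and promotes items that recur in at least `promote_after` episodes into semantic memory. Procedural memory is left out, and all names are hypothetical.

```python
from collections import Counter, deque


class LayeredMemory:
    """Toy analogy of memory subsystems linked by consolidation (hypothetical names)."""

    def __init__(self, working_capacity=3, promote_after=2):
        self.working = deque(maxlen=working_capacity)  # small, short-lived context
        self.episodic = []                             # items displaced from working memory
        self.semantic = set()                          # items seen often enough to generalize
        self.promote_after = promote_after

    def observe(self, item):
        # A full deque drops its oldest item on append, so copy it to episodic memory first
        if len(self.working) == self.working.maxlen:
            self.episodic.append(self.working[0])
        self.working.append(item)

    def consolidate(self):
        # Promote items that recur across enough episodes into semantic memory
        for item, count in Counter(self.episodic).items():
            if count >= self.promote_after:
                self.semantic.add(item)


memory = LayeredMemory(working_capacity=2, promote_after=2)
for item in ["likes tea", "asked about taxes", "likes tea", "asked about code", "likes tea"]:
    memory.observe(item)
memory.consolidate()
print(memory.episodic)  # ['likes tea', 'asked about taxes', 'likes tea']
print(memory.semantic)  # {'likes tea'}
```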

This is where hierarchical memory systems for AI agents come in.

A Hierarchical Model of AI Agent Memory

As shown in the figure above, a hierarchical memory system organizes storage into four layers, ordered from the most abstract and general to the most specific:

Domain Layer: The highest level of abstraction. It identifies the broad area and organizes knowledge by global context. Examples: finance, healthcare, and technology.

Category Layer: Subdivides each domain into conceptual groupings. Examples: taxation and wealth management within finance.

Memory Trace Layer: Generalized knowledge patterns, abstracted from relevant conversation threads over repeated experiences.

Episode Layer: Raw logs of individual interactions, the most specific level.
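The four layers above map naturally onto a tree. This minimal Python sketch assumes each node keeps a list of child pointers into the layer below; the article does not specify how H-MEM encodes these links, and `MemoryNode`, `store_episode`, and the extra `root` level are hypothetical. Vectors are omitted to keep the structure visible.

```python
from dataclasses import dataclass, field

# Layer names from most abstract to most specific; "root" is a hypothetical entry point
LEVELS = ("root", "domain", "category", "trace", "episode")


@dataclass
class MemoryNode:
    level: str
    content: str
    children: list = field(default_factory=list)  # pointers into the next layer down

    def child(self, content):
        """Return the child holding `content`, creating it in the next layer if needed."""
        for node in self.children:
            if node.content == content:
                return node
        node = MemoryNode(LEVELS[LEVELS.index(self.level) + 1], content)
        self.children.append(node)
        return node


def store_episode(root, domain, category, trace, episode):
    # Walk (or build) the path domain -> category -> trace, then attach the raw episode
    return root.child(domain).child(category).child(trace).child(episode)


root = MemoryNode("root", "all memories")
store_episode(root, "finance", "taxation", "user files taxes as a freelancer",
              "asked about quarterly estimates")
leaf = store_episode(root, "finance", "taxation", "user files taxes as a freelancer",
                     "asked about deductions")
print(leaf.level)                                              # episode
print(len(root.children[0].children[0].children[0].children))  # 2
```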

The top three layers store summaries at decreasing levels of abstraction, while the bottom layer stores the complete context of each interaction. The episode layer also holds user profile information, such as the user's preferences, interests, emotional states, and behavioral patterns. All memory entries are encoded as dense vectors by a neural encoder to support efficient semantic retrieval.
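The encoding step turns every entry into a vector that can be compared by similarity. A real system would use a trained neural encoder; to keep this Python sketch self-contained, it assumes a hashed bag-of-words vector as a stand-in, which captures word overlap but not meaning. `toy_encode` is a hypothetical name.

```python
import hashlib
import math


def toy_encode(text, dim=256):
    """Stand-in for a neural encoder: hashed bag-of-words, scaled to unit length."""
    vec = [0.0] * dim
    for token in text.lower().split():
        digest = hashlib.blake2b(token.encode("utf-8"), digest_size=8).digest()
        vec[int.from_bytes(digest, "little") % dim] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


def cosine(a, b):
    # toy_encode returns unit-length vectors for non-empty text, so the dot product is the cosine
    return sum(x * y for x, y in zip(a, b))


entry = toy_encode("user asked about the tax deadline")
print(cosine(entry, toy_encode("tax deadline")))       # relatively high: shared words
print(cosine(entry, toy_encode("debug python code")))  # low: no shared words (barring hash collisions)
```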

For each incoming query, H-MEM routes the search layer by layer, from domain to category to memory trace to episode, comparing the query only against entries beneath those selected at the layer above. This avoids exhaustive search, keeps retrieval efficient as memory grows substantially, and still lets the agent reach fine-grained episode details for its reasoning.
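The layer-by-layer routing can be sketched as a greedy top-down search. This minimal Python sketch assumes cosine similarity, a small hand-built binary tree in which each node's vector encodes its path, and a hypothetical `beam` parameter for how many nodes survive per layer; H-MEM's actual index encoding and scoring may differ. With `beam=1`, the query is compared against 2 nodes at each of the 4 layers (8 comparisons) instead of all 16 episodes.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    text: str
    vector: list
    children: list = field(default_factory=list)


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def route(roots, query, beam=1):
    """Descend layer by layer, keeping only the `beam` best nodes at each layer."""
    frontier, comparisons = roots, 0
    while True:
        comparisons += len(frontier)  # one similarity score per candidate in this layer
        best = sorted(frontier, key=lambda n: cosine(query, n.vector), reverse=True)[:beam]
        children = [c for n in best for c in n.children]
        if not children:  # the selected nodes are episodes: stop here
            return best, comparisons
        frontier = children  # only the children of selected nodes are searched next


def build_tree(path=(), depth=4):
    # Hypothetical binary tree (2 domains ... 16 episodes); each vector encodes
    # the +1/-1 branch choices on the node's path, padded with zeros
    nodes = []
    for sign in (1.0, -1.0):
        p = path + (sign,)
        children = build_tree(p, depth) if len(p) < depth else []
        label = "/".join("L" if s > 0 else "R" for s in p)
        nodes.append(Node(label, list(p) + [0.0] * (depth - len(p)), children))
    return nodes


hits, cost = route(build_tree(), query=[1.0, -1.0, 1.0, 1.0])
print(hits[0].text, cost)  # L/R/L/L 8  (a flat scan would score all 16 episodes)
```

The trade-off is that a greedy descent can commit to the wrong domain early; a wider beam gives up some of the savings in exchange for better recall.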

Experimental Observations from Long-Horizon Agents

In experimental simulations of multi-session tasks, such as persistent research assistants, long-term project-planning agents, and iterative tool-based workflows, flat memory architectures degrade in predictable ways: redundant reasoning increases over time, agents repeat failed strategies, and context windows fill with low-value historical noise.

Hierarchical memory systems improve performance in several ways. Procedural memory reduces repeated planning overhead, because agents reapply successful execution graphs rather than regenerating them. Semantic consolidation improves cross-session consistency, especially in user modeling and constraint retention. Meta-memory pruning reduces the number of tokens injected into the context and limits retrieval noise.
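The procedural-memory idea can be sketched as a small skill library. This hypothetical Python sketch is not H-MEM's implementation: it stores linear action sequences (a simplification of full execution graphs) keyed by task type, reuses a stored skill instead of calling the planner, and passes known failures to the planner so it does not repeat them. The `planner(task_type, avoid)` signature is an assumption.

```python
class ProceduralMemory:
    """Hypothetical skill library keyed by task type."""

    def __init__(self):
        self.skills = {}    # task type -> action sequence that succeeded
        self.failures = {}  # task type -> set of action sequences that failed

    def record(self, task_type, actions, succeeded):
        actions = tuple(actions)
        if succeeded:
            self.skills[task_type] = actions
        else:
            self.failures.setdefault(task_type, set()).add(actions)

    def plan(self, task_type, planner):
        # Reuse a stored skill when one exists, skipping the (expensive) planner
        if task_type in self.skills:
            return list(self.skills[task_type])
        # Otherwise plan from scratch, telling the planner which sequences already failed
        return list(planner(task_type, self.failures.get(task_type, set())))


planner_calls = []


def expensive_planner(task_type, avoid):
    # Hypothetical planner: returns the first candidate that has not failed before
    planner_calls.append(task_type)
    options = [("search", "summarize"), ("search", "read", "summarize")]
    return next((o for o in options if o not in avoid), options[0])


memory = ProceduralMemory()
first = memory.plan("literature review", expensive_planner)
memory.record("literature review", first, succeeded=False)
second = memory.plan("literature review", expensive_planner)
memory.record("literature review", second, succeeded=True)
third = memory.plan("literature review", expensive_planner)  # reused, no planner call
print(third, len(planner_calls))  # ['search', 'read', 'summarize'] 2
```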

The most striking effect is stability. Agents with layered memory show fewer regressions in goal tracking and repeat prior errors significantly less often. Autonomy becomes cumulative rather than cyclical. Importantly, these benefits do not come from larger base models; they come from how memory is organized.

Hierarchical Memory Design Trade-offs and Limitations

Hierarchical memory is not without risks and limitations.

Privacy and security concerns: H-MEM stores a large amount of user information, including private and sensitive data. The system therefore needs effective privacy protections, such as access restrictions, to guard that data against misuse and cyberthreats.

Over-aggressive abstraction: Summaries may remove nuance, and procedural compression can oversimplify context-specific actions. Long-term storage of incorrect knowledge can also fossilize mistakes. Mitigating this requires validation loops and memory audits that detect contradictions.
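A memory audit can start as simply as refusing to overwrite a stored fact silently. This minimal Python sketch assumes facts are stored as `(subject, attribute) -> value` pairs; the class and method names are hypothetical. A conflicting write is queued for review by a validation loop instead of replacing the stored value.

```python
class AuditedFactStore:
    """Hypothetical fact store that flags contradictions instead of overwriting silently."""

    def __init__(self):
        self.facts = {}      # (subject, attribute) -> value
        self.conflicts = []  # (key, stored value, new value) awaiting review

    def assert_fact(self, subject, attribute, value):
        key = (subject, attribute)
        old = self.facts.get(key)
        if old is not None and old != value:
            # Keep the stored value, but queue the contradiction for a validation loop
            self.conflicts.append((key, old, value))
            return False
        self.facts[key] = value
        return True


facts = AuditedFactStore()
facts.assert_fact("user", "home_country", "Canada")
accepted = facts.assert_fact("user", "home_country", "Germany")
print(accepted)         # False
print(facts.conflicts)  # [(('user', 'home_country'), 'Canada', 'Germany')]
```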

Latency: Keeping retrieval fast takes careful engineering, including early pruning, caching, and confidence thresholds that prevent bottlenecks.
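Caching and confidence thresholds can be combined in a few lines. This Python sketch makes several assumptions: Jaccard word overlap stands in for embedding similarity, `MEMORIES` is a hypothetical in-memory table, and the 0.2 threshold is an arbitrary illustration. `functools.lru_cache` serves repeated queries without rescoring, and the threshold keeps weak matches out of the prompt.

```python
from functools import lru_cache

# Hypothetical memory table: memory text -> keywords used for matching
MEMORIES = {
    "user prefers metric units": {"units", "metric", "measurement"},
    "user is training for a marathon": {"running", "marathon", "training"},
    "user dislikes long answers": {"length", "verbose", "answers"},
}


def score(query_terms, memory_terms):
    # Jaccard word overlap, a cheap stand-in for embedding similarity
    return len(query_terms & memory_terms) / len(query_terms | memory_terms)


@lru_cache(maxsize=1024)
def retrieve(query, threshold=0.2):
    """Return memories scoring at or above `threshold`; repeated queries hit the cache."""
    terms = set(query.lower().split())
    scored = sorted(((score(terms, kw), text) for text, kw in MEMORIES.items()), reverse=True)
    return tuple(text for s, text in scored if s >= threshold)  # drop weak matches before the prompt


print(retrieve("marathon training plan"))  # ('user is training for a marathon',)
retrieve("marathon training plan")
print(retrieve.cache_info().hits)          # 1
```

Because the cache does not know when `MEMORIES` changes, a real system would need to invalidate it (for example with `retrieve.cache_clear()`) after every memory write.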

Limited support for multimodal memory: The current H-MEM framework focuses mainly on text, but real-world interactions between AI agents and users also involve images, audio, and video. H-MEM will need to integrate these multimodal information sources.

Limited memory capacity: Despite major improvements, H-MEM systems still have finite memory capacity. As data volume grows, the system can gradually run out of room, so long-running agents need a strategy for deciding what to keep and what to discard.
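One possible way to bound the episode layer is least-recently-used (LRU) eviction. This is a hypothetical Python sketch built on `collections.OrderedDict`; H-MEM does not prescribe it, and LRU is only one of many possible retention policies.

```python
from collections import OrderedDict


class BoundedEpisodeStore:
    """Hypothetical capacity-limited episode layer with least-recently-used eviction."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.episodes = OrderedDict()  # episode id -> text, least recently used first
        self.evicted = []              # ids dropped to stay within capacity

    def add(self, episode_id, text):
        self.episodes[episode_id] = text
        self.episodes.move_to_end(episode_id)
        if len(self.episodes) > self.capacity:
            old_id, _ = self.episodes.popitem(last=False)  # remove the least recently used
            self.evicted.append(old_id)

    def get(self, episode_id):
        # Reading an episode marks it as recently used
        self.episodes.move_to_end(episode_id)
        return self.episodes[episode_id]


store = BoundedEpisodeStore(capacity=2)
store.add("e1", "asked about taxes")
store.add("e2", "asked about code")
store.get("e1")
store.add("e3", "asked about travel")
print(store.evicted)  # ['e2']
```

Plain eviction loses information; in a hierarchical design, an episode could first be summarized into its memory trace before it is dropped.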

Final Words

The industry conversation around AI autonomy often focuses on model scale, multimodality, or reasoning benchmarks. But true long-term autonomy requires persistence. AI agents that cannot remember, abstract, and consolidate will stay inefficient and quickly become obsolete.

Hierarchical memory systems turn reactive LLMs into evolving cognitive systems. They let agents accumulate knowledge, deliver stable personalized experiences, act autonomously on the basis of historical data, and exhibit continuous intelligence.

Memory architecture is becoming as strategically significant as model architecture itself. A poorly designed memory lets autonomy collapse under the weight of its own history, while a well-designed one brings agents much closer to sustained intelligence.